How 3D Game Rendering Works: Texturing

In this third part of our deeper look at 3D game rendering, we'll be focusing on what can happen to the 3D world after the vertex processing has been done and the scene has been rasterized. Texturing is one of the most important stages in rendering, even though all that is happening is that the colors of a two-dimensional grid of colored blocks are calculated and changed.

The majority of the visual effects seen in games today are down to the clever use of textures -- without them, games would be dull and lifeless. So let's dive in and see how this all works!

As always, if you're not quite ready for a deep dive into texturing, don't panic -- you can get started with our 3D Game Rendering 101. But once you're past the basics, do read on for our next look at the world of 3D graphics.

Part 0: 3D Game Rendering 101

The Making of Graphics Explained

Part 1: 3D Game Rendering: Vertex Processing

A Deeper Dive Into the World of 3D Graphics

Part 2: 3D Game Rendering: Rasterization and Ray Tracing

From 3D to Flat 2D, POV and Lighting

Part 3: 3D Game Rendering: Texturing

Bilinear, Trilinear, Anisotropic Filtering, Bump Mapping, More

Part 4: 3D Game Rendering: Lighting and Shadows

The Math of Lighting, SSR, Ambient Occlusion, Shadow Mapping

Part 5: 3D Game Rendering: Anti-Aliasing

SSAA, MSAA, FXAA, TAA, and Others

Let's start simple

Pick any top selling 3D game from the past 12 months and they will all share one thing in common: the use of texture maps (or just textures). This is such a common term that most people will conjure the same image when thinking about textures: a simple, flat square or rectangle that contains a picture of a surface (grass, stone, metal, clothing, a face, etc).

But when used in multiple layers and woven together using complex arithmetic, the use of these basic pictures in a 3D scene can produce stunningly realistic images. To see how this is possible, let's start by skipping them altogether and seeing what objects in a 3D world can look like without them.

As we have seen in previous articles, the 3D world is made up of vertices -- simple shapes that get moved and then colored in. These are then used to make primitives, which in turn are squashed into a 2D grid of pixels. Since we're not going to use textures, we need to color in those pixels.

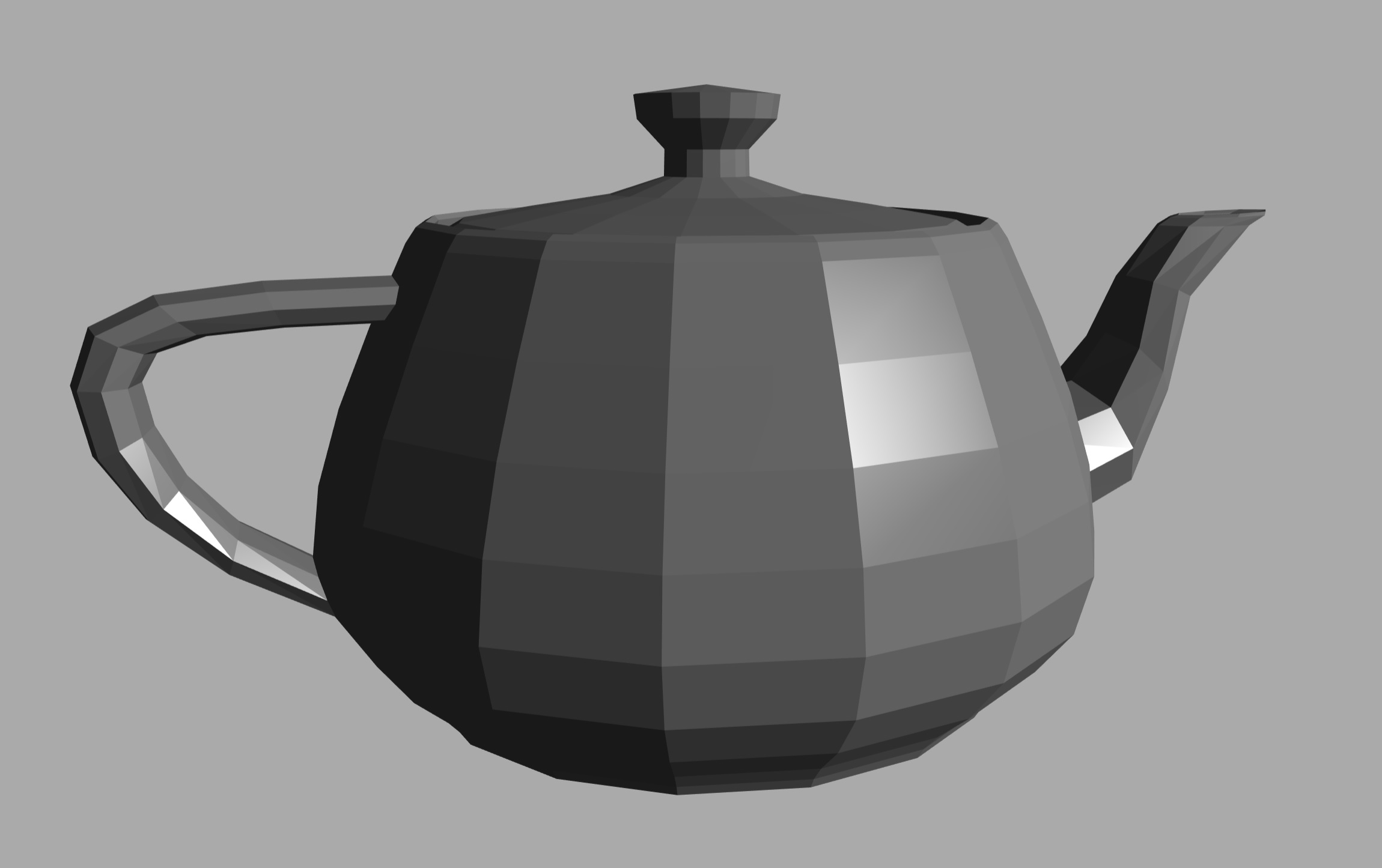

One method that can be used, called flat shading, involves taking the color of the first vertex of the primitive, and then using that color for all of the pixels that get covered by the shape in the raster. It looks something like this:

This is obviously not a realistic teapot, not least because the surface color is all wrong. The colors jump from one level to another; there is no smooth transition. One solution to this problem could be to use something called Gouraud shading.

This is a process which takes the colors of the vertices and then calculates how the color changes across the surface of the triangle. The math used is known as linear interpolation, which sounds fancy but in reality means that if one side of the primitive has the color 0.2 red, for example, and the other side is 0.8 red, then the middle of the shape has a color midway between 0.2 and 0.8 (i.e. 0.5).
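To make the interpolation concrete, here is a minimal Python sketch. The function name `lerp` and the five sample points are our own illustrative choices, not taken from any particular renderer:

```python
def lerp(a, b, t):
    """Linearly interpolate between a and b; t=0 gives a, t=1 gives b."""
    return a + (b - a) * t

# Red channel at the two sides of the primitive, as in the example above.
left_red, right_red = 0.2, 0.8

# Sample the red channel at five evenly spaced points across the shape.
samples = [round(lerp(left_red, right_red, i / 4), 2) for i in range(5)]
print(samples)  # the middle entry is the midpoint value, 0.5
```

A real rasterizer does the same thing across two dimensions of a triangle and for every color channel, but the arithmetic per channel is no more complicated than this.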

It's relatively simple to do and that's its main benefit, as simple means speed. Many early 3D games used this technique, because the hardware performing the calculations was limited in what it could do.

But even this has problems, because if a light is pointing right at the middle of a triangle, then its corners (the vertices) might not capture this properly. This means that highlights caused by the light could be missed entirely.

While flat and Gouraud shading have their place in the rendering armory, the above examples are clear candidates for the use of textures to improve them. And to get a good understanding of what happens when a texture is applied to a surface, we'll pop back in time... all the way back to 1996.

A quick bit of gaming and GPU history

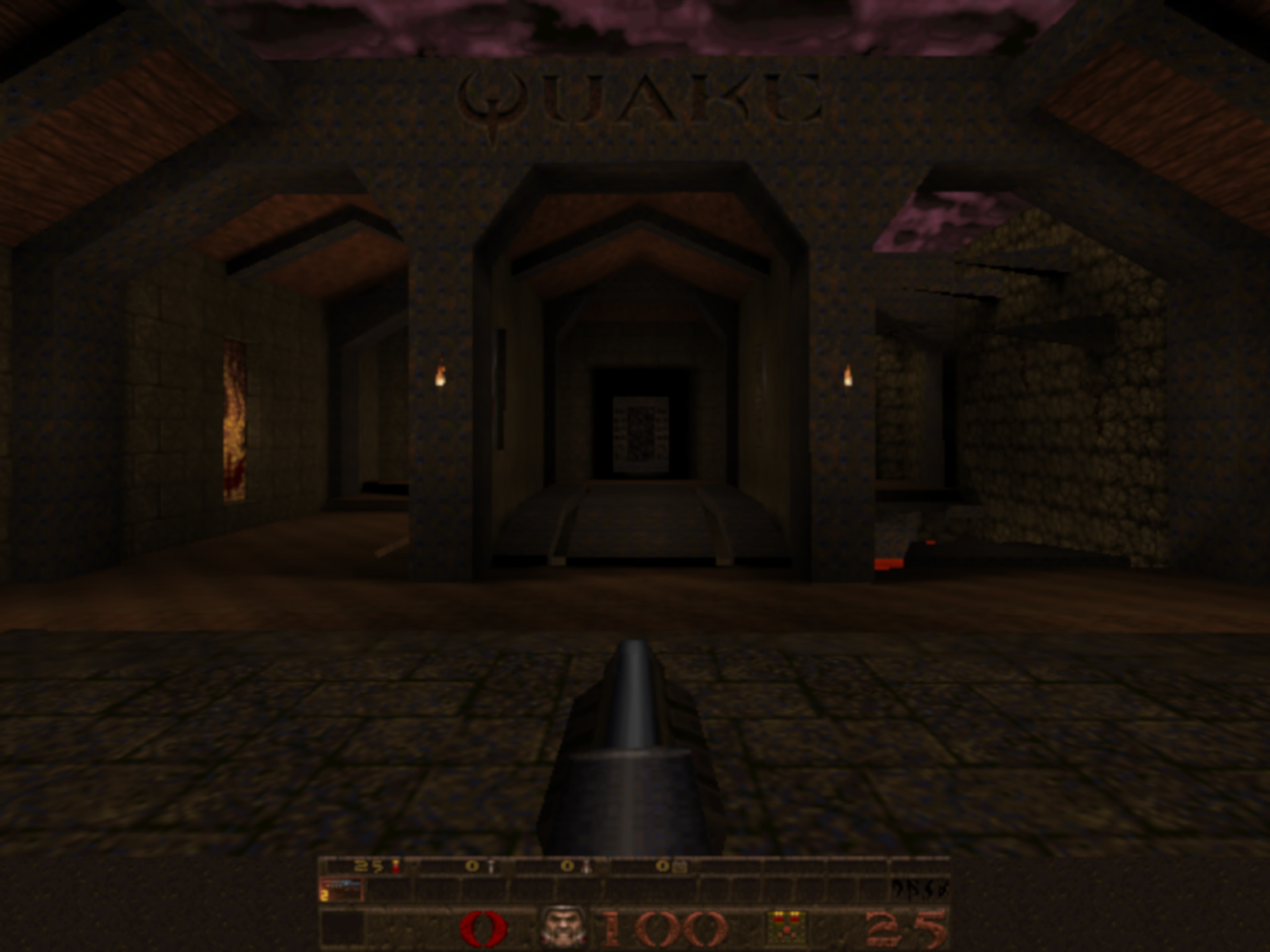

Quake was released some 23 years ago, a landmark game by id Software. While it wasn't the first game to use 3D polygons and textures to render the environment, it was definitely one of the first to use them all so effectively.

Something else it did was to showcase what could be done with OpenGL (the graphics API was still in its first revision at that time) and it also went a very long way to helping the sales of the first crop of graphics cards like the Rendition Verite and the 3Dfx Voodoo.

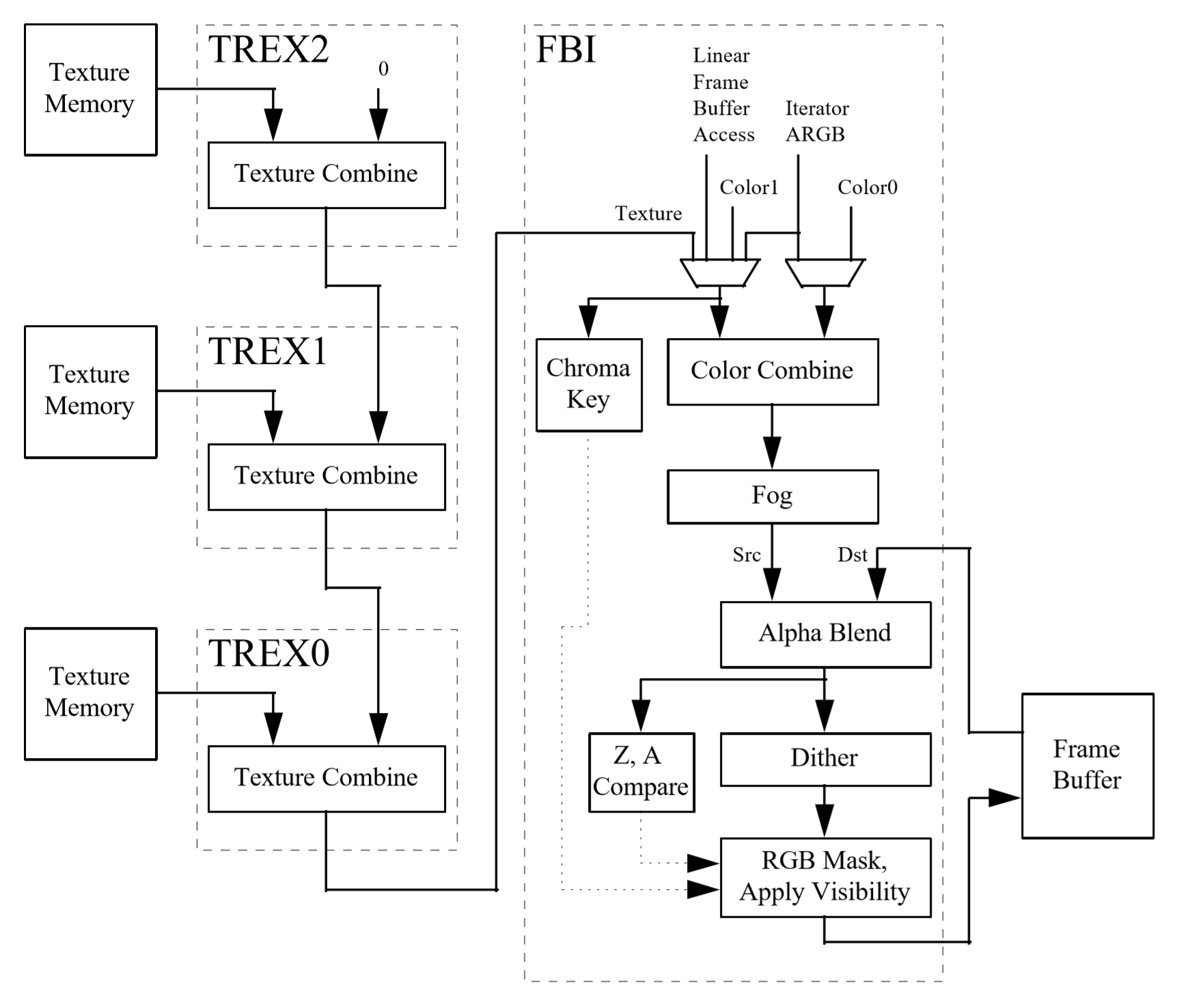

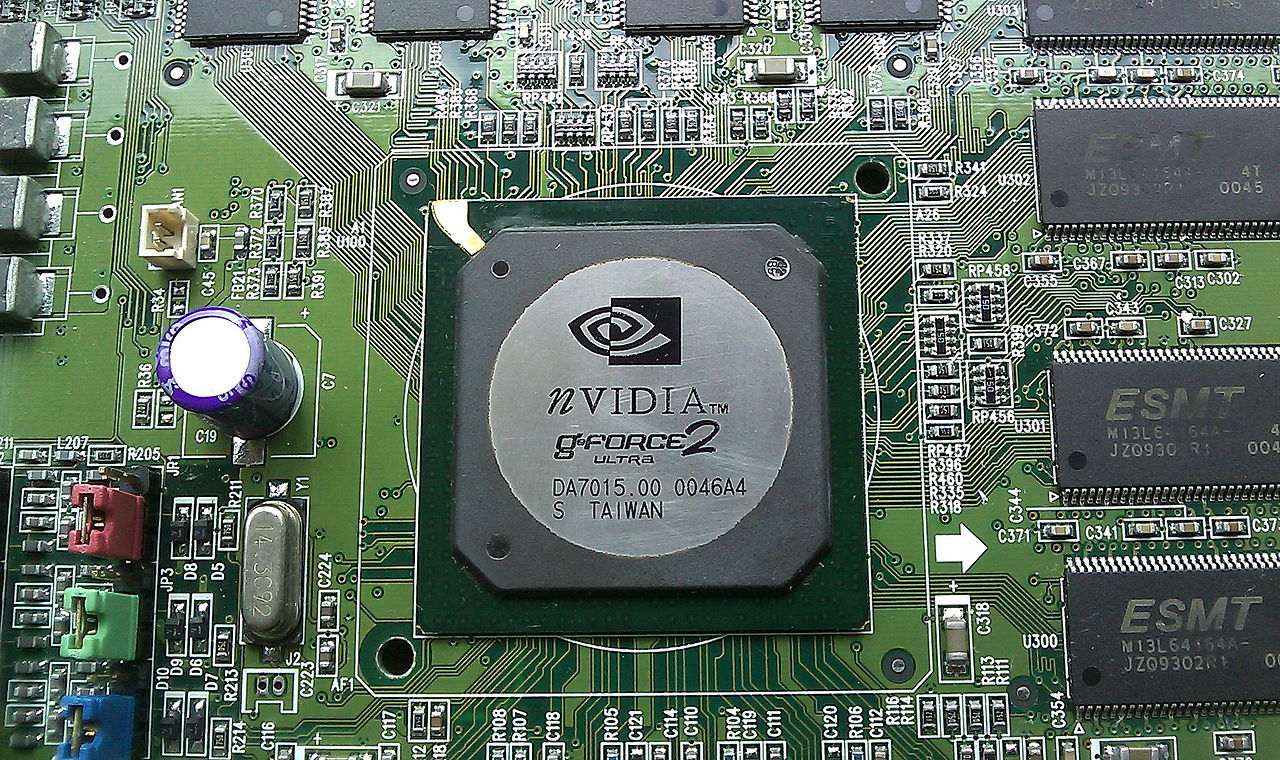

Compared to today's standards, the Voodoo was exceptionally basic: no 2D graphics support, no vertex processing, and just the very basics of pixel processing. It was a beauty nonetheless:

It had an entire chip (the TMU) for getting a pixel from a texture and another chip (the FBI) to then blend it with a pixel from the raster. It could do a couple of additional processes, such as doing fog or transparency effects, but that was pretty much it.

If we take a look at an overview of the architecture behind the design and operation of the graphics card, we can see how these processes work.

The FBI chip takes two color values and blends them together; one of them can be a value from a texture. The blending process is mathematically quite simple but varies a little depending on what exactly is being blended, and what API is being used to carry out the instructions.

If we look at what Direct3D offers in terms of blending functions and blending operations, we can see that each pixel is first multiplied by a number between 0.0 and 1.0. This determines how much of the pixel's color will influence the final appearance. Then the two adjusted pixel colors are either added, subtracted, or multiplied; in some functions, the operation is a logic statement where something like the brightest pixel is always selected.

The above image is an example of how this works in practice; note that for the left hand pixel, the factor used is the pixel's alpha value. This number indicates how transparent the pixel is.
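The multiply-then-combine step can be sketched in a few lines of Python. This is only an illustration: the function name `blend` is ours, the operation shown is addition, and the destination factor of (1 - alpha) is an assumption (it is a very common choice, but the API lets the programmer pick others):

```python
def blend(src, dst, src_factor, dst_factor):
    """Scale each RGB color by its factor, add them, and clamp to 0..1."""
    return tuple(
        min(1.0, s * src_factor + d * dst_factor) for s, d in zip(src, dst)
    )

texel = (1.0, 0.0, 0.0)   # color fetched from the texture (source)
pixel = (0.0, 0.0, 1.0)   # color already in the frame (destination)
alpha = 0.25              # source alpha: 0 = fully transparent, 1 = opaque

# Classic alpha blend: source weighted by alpha, destination by (1 - alpha).
result = blend(texel, pixel, alpha, 1.0 - alpha)
print(result)
```

With an alpha of 0.25, the mostly transparent red texel only tints the blue pixel underneath it, which is the behavior you would expect from a see-through surface.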

The rest of the stages involve applying a fog value (taken from a table of numbers created by the programmer, then doing the same blending math); carrying out some visibility and transparency checks and adjustments; before finally writing the color of the pixel to the memory on the graphics card.

Why the history lesson? Well, despite the relative simplicity of the design (especially compared to modern behemoths), the process describes the fundamental basics of texturing: get some color values and blend them, so that models and environments look how they're supposed to in a given situation.

Today's games still do all of this; the only difference is the number of textures used and the complexity of the blending calculations. Together, they simulate the visual effects seen in movies or how light interacts with different materials and surfaces.

The basics of texturing

To us, a texture is a flat, 2D picture that gets applied to the polygons that make up the 3D structures in the viewed frame. To a computer, though, it's nothing more than a small block of memory, in the form of a 2D array. Each entry in the array represents a color value for one of the pixels in the texture image (better known as texels - texture pixels).

Every vertex in a polygon has a set of 2 coordinates (usually labelled as u,v) associated with it that tells the computer what pixel in the texture is associated with it. The vertices themselves have a set of 3 coordinates (x,y,z), and the process of linking the texels to the vertices is called texture mapping.
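As a rough sketch of that idea, here is a tiny texture held as a 2D array, plus a lookup that turns a u,v pair into a texel. The names `texel_at` and `vertices`, the 0 to 1 range for u,v, and the 2 x 2 texture are all assumptions made for the example:

```python
# A tiny 2 x 2 texture stored as a 2D array of (r, g, b) texels.
texture = [
    [(255, 0, 0), (0, 255, 0)],      # row 0: red, green
    [(0, 0, 255), (255, 255, 255)],  # row 1: blue, white
]

def texel_at(texture, u, v):
    """Map u,v in the range 0..1 to the texel that contains that point."""
    height, width = len(texture), len(texture[0])
    col = min(int(u * width), width - 1)    # clamp so u = 1.0 stays in range
    row = min(int(v * height), height - 1)
    return texture[row][col]

# Each vertex carries a position (x, y, z) plus a texture coordinate (u, v).
vertices = [
    {"pos": (-1.0, -1.0, 0.0), "uv": (0.0, 0.0)},
    {"pos": (1.0, -1.0, 0.0), "uv": (1.0, 0.0)},
    {"pos": (1.0, 1.0, 0.0), "uv": (1.0, 1.0)},
]
for vert in vertices:
    print(vert["pos"], "->", texel_at(texture, *vert["uv"]))
```

The positions decide where the triangle ends up on screen; the u,v values decide which part of the picture is pinned to each corner.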

To see this in action, let's turn to a tool we've used a few times in this series of articles: the Real Time Rendering WebGL tool. For now, we'll also drop the z coordinate from the vertices and keep everything on a flat plane.

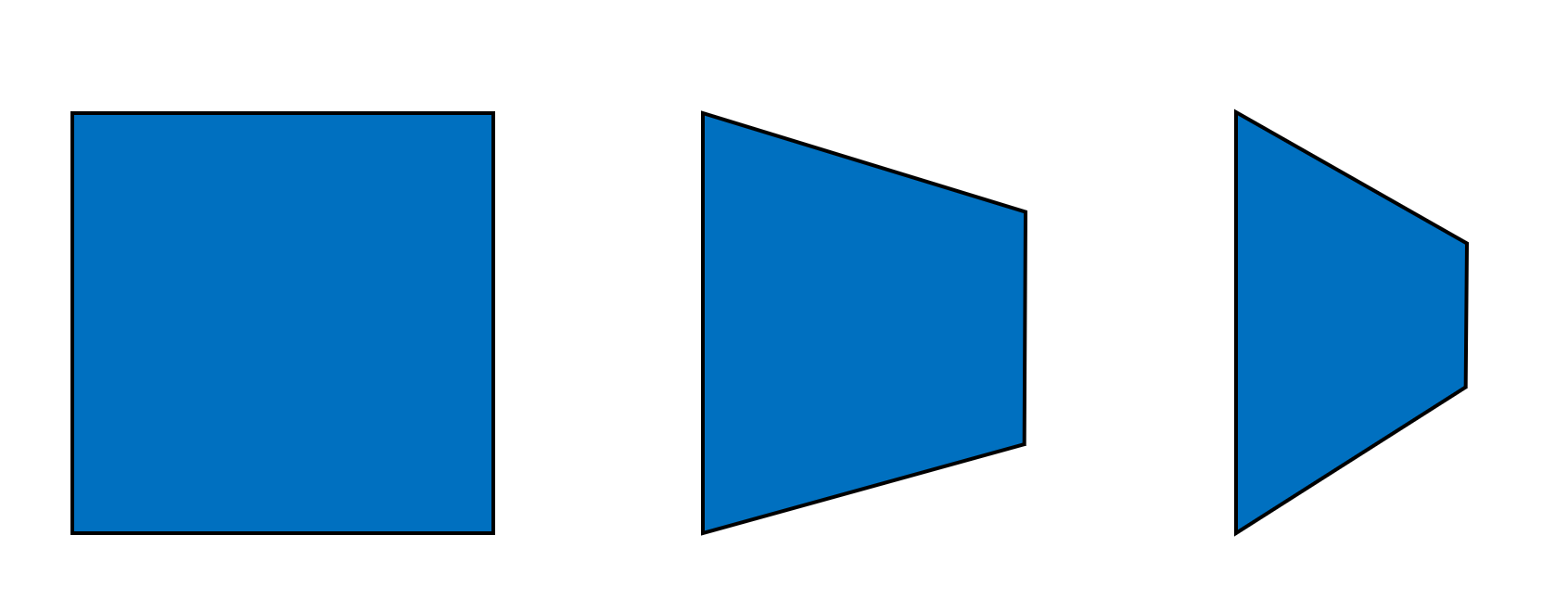

From left-to-right, we have the texture's u,v coordinates mapped directly to the corner vertices' x,y coordinates. Then the top vertices have had their y coordinates increased, but as the texture is still directly mapped to them, the texture gets stretched up. In the far right image, it's the texture that's altered this time: the u values have been raised but this results in the texture becoming squashed and then repeated.

This is because although the texture is now effectively taller, thanks to the higher u value, it still has to fit into the primitive -- essentially the texture has been partially repeated. This is one way of doing something that's seen in lots of 3D games: texture repeating. Common examples of this can be found in scenes with rocky or grassy landscapes, or brick walls.
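One simple way to picture the repeat behavior is that only the fractional part of a texture coordinate is used for the lookup. The sketch below assumes that wrapping rule; the helper name `wrap_repeat` is ours:

```python
import math

def wrap_repeat(coord):
    """Keep only the fractional part, so 1.25 samples the same spot as 0.25."""
    return coord - math.floor(coord)

# A u coordinate running from 0 to 2 across a primitive shows the texture twice.
for u in (0.0, 0.5, 1.0, 1.25, 1.75):
    print(u, "->", wrap_repeat(u))
```

Graphics APIs also offer other wrapping rules, such as clamping to the edge or mirroring, but repeating is the one that makes tiled grass and brick walls possible.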

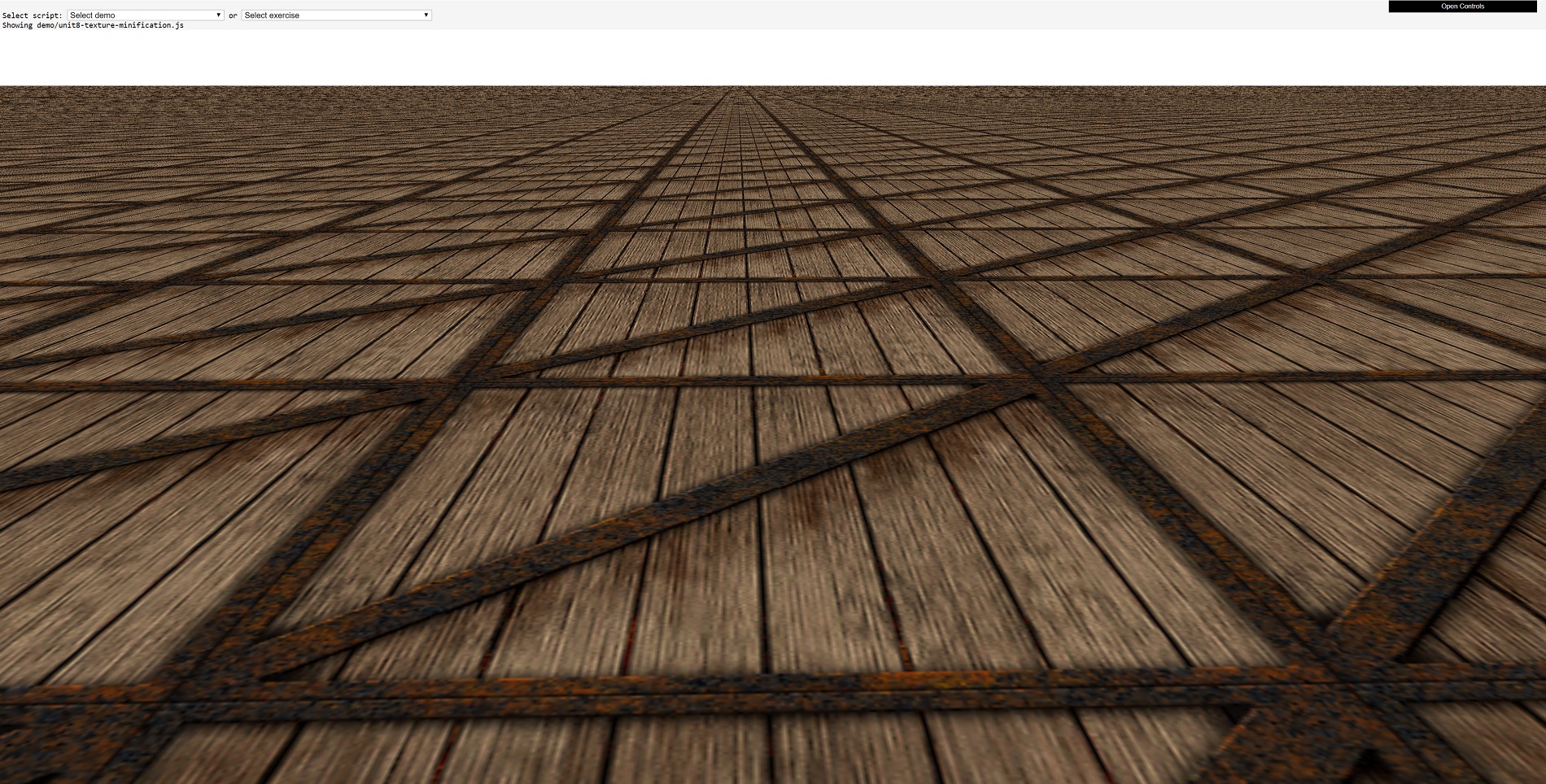

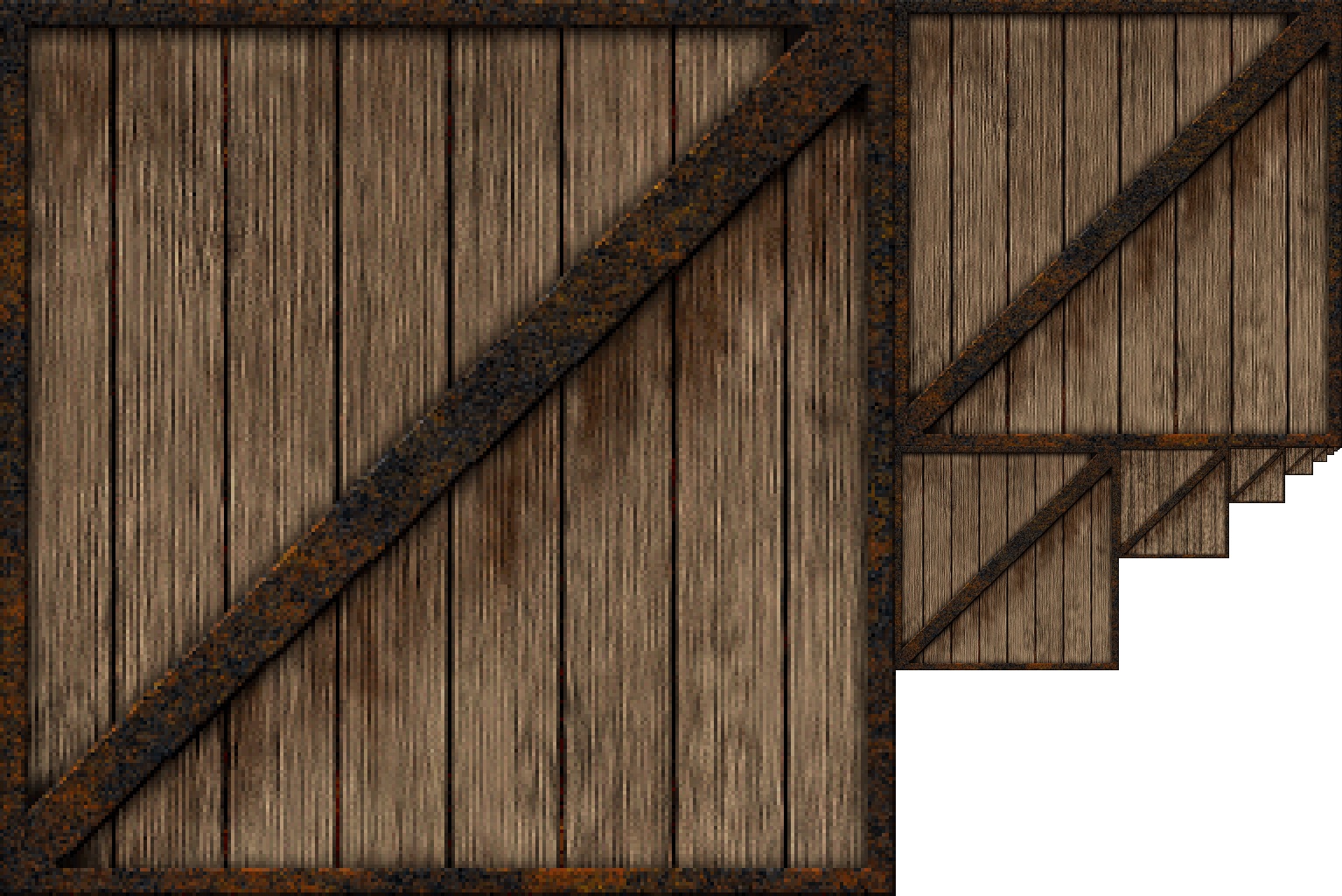

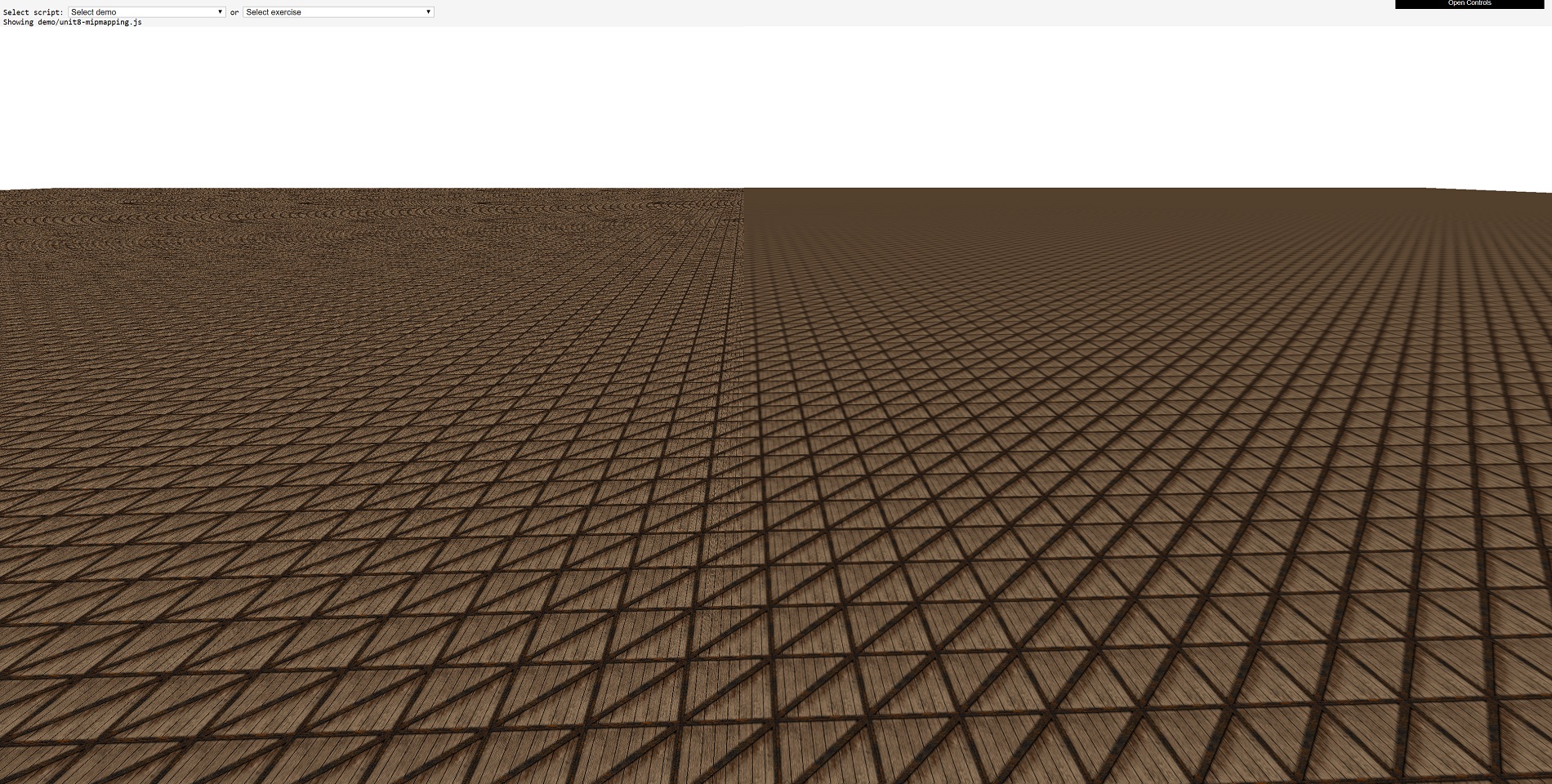

Now let's adjust the scene so that there are more primitives, and we'll also bring depth back into play. What we have below is a classic landscape view, but with the crate texture copied, as well as repeated, across the primitives.

Now that crate texture, in its original gif format, is 66 kiB in size and has a resolution of 256 x 256 pixels. The original resolution of the portion of the frame that the crate textures cover is 1900 x 680, so in terms of just pixel 'area' that region should only be able to display 20 crate textures.

We're obviously looking at way more than 20, so it must mean that a lot of the crate textures in the background are much smaller than 256 x 256 pixels. Indeed they are, and they've undergone a process called texture minification (yes, that is a word!). Now let's try it again, but this time zoomed right into one of the crates.

Don't forget that the texture is only 256 x 256 pixels in size, but here we can see one texture being more than half the width of the 1900 pixels wide image. This texture has gone through something called texture magnification.

These two texture processes occur in 3D games all the time, because as the camera moves about the scene or models move closer and further away, all of the textures applied to the primitives need to be scaled along with the polygons. Mathematically, this isn't a big deal; in fact, it's so simple that even the most basic of integrated graphics chips blitz through such work. However, texture minification and magnification present fresh problems that have to be resolved somehow.

Enter the mini-me of textures

The first issue to be fixed is for textures in the distance. If we look back at that first crate landscape image, the ones right at the horizon are effectively only a few pixels in size. So trying to squash a 256 x 256 pixel image into such a small space is pointless for two reasons.

One, a smaller texture will take up less memory space in a graphics card, which is handy for trying to fit into a small amount of cache. That means it is less likely to be removed from the cache and so repeated use of that texture will gain the full performance benefit of data being in nearby memory. The second reason we'll come to in a moment, as it's tied to the same problem for textures zoomed in.

A common solution to the use of large textures being squashed into tiny primitives involves the use of mipmaps. These are scaled down versions of the original texture; they can be generated by the game engine itself (by using the relevant API command to make them) or pre-made by the game designers. Each level of mipmap texture has half the linear dimensions of the previous one.

So for the crate texture, it goes something like this: 256 x 256 → 128 x 128 → 64 x 64 → 32 x 32 → 16 x 16 → 8 x 8 → 4 x 4 → 2 x 2 → 1 x 1.

The mipmaps are all packed together, so that the texture is still the same filename but is now larger. The texture is packed in such a way that the u,v coordinates not only determine which texel gets applied to a pixel in the frame, but also from which mipmap. The programmers then code the renderer to determine the mipmap to be used based on the depth value of the frame pixel, i.e. if it is very high, then the pixel is in the far distance, so a tiny mipmap can be used.

Sharp eyed readers might have spotted a downside to mipmaps, though, and it comes at the cost of the textures being larger. The original crate texture is 256 x 256 pixels in size, but as you can see in the above image, the texture with mipmaps is now 384 x 256. Yes, there's lots of empty space, but no matter how you pack in the smaller textures, the overall increase to at least one of the texture's dimensions is 50%.

But this is only true for pre-made mipmaps; if the game engine is programmed to generate them as required, then the increase is never more than 33% of the original texture size. So for a relatively small increase in memory for the texture mipmaps, you're gaining performance benefits and visual improvements.
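The halving sequence and the "never more than 33%" figure are easy to check with a short Python sketch. It assumes the mip levels are stored tightly, with no padding, and the helper name `mip_chain` is our own:

```python
def mip_chain(size):
    """Return the edge length of every mip level, halving down to 1 x 1."""
    levels = [size]
    while levels[-1] > 1:
        levels.append(levels[-1] // 2)
    return levels

levels = mip_chain(256)
base_texels = levels[0] ** 2
extra_texels = sum(edge * edge for edge in levels[1:])

print(levels)                      # [256, 128, 64, 32, 16, 8, 4, 2, 1]
print(extra_texels / base_texels)  # about 0.333, i.e. roughly a third extra
```

Each level has a quarter of the texels of the one before it, so the extra storage is 1/4 + 1/16 + 1/64 and so on, a series that creeps up towards one third but never reaches it.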

Below is an off/on comparison of texture mipmaps:

On the left hand side of the image, the crate textures are being used 'as is', resulting in a grainy appearance and so-called moiré patterns in the distance. Whereas on the right hand side, the use of mipmaps results in a much smoother transition across the landscape, where the crate texture blurs into a consistent color at the horizon.

The thing is, though, who wants blurry textures spoiling the background of their favorite game?

Bilinear, trilinear, anisotropic - it's all Greek to me

The process of selecting a pixel from a texture, to be applied to a pixel in a frame, is called texture sampling, and in a perfect world, there would be a texture that exactly fits the primitive it's for -- regardless of its size, position, direction, and so on. In other words, texture sampling would be nothing more than a straight 1-to-1 texel-to-pixel mapping process.

Since that isn't the case, texture sampling has to account for a number of factors:

- Has the texture been magnified or minified?

- Is the texture original or a mipmap?

- What angle is the texture being displayed at?

Let's analyze these one at a time. The first one is obvious enough: if the texture has been magnified, then there are too few texels to go around and each texel has to cover more than one pixel; with minification it will be the other way around, with more texels covering each pixel than required. That's a bit of a problem.

The second one isn't though, as mipmaps are used to get around the texture sampling issue with primitives in the distance, so that just leaves textures at an angle. And yes, that's a problem too. Why? Because all textures are images generated for a view 'face on', or to be all math-like: the normal of a texture surface is the same as the normal of the surface that the texture is currently displayed on.

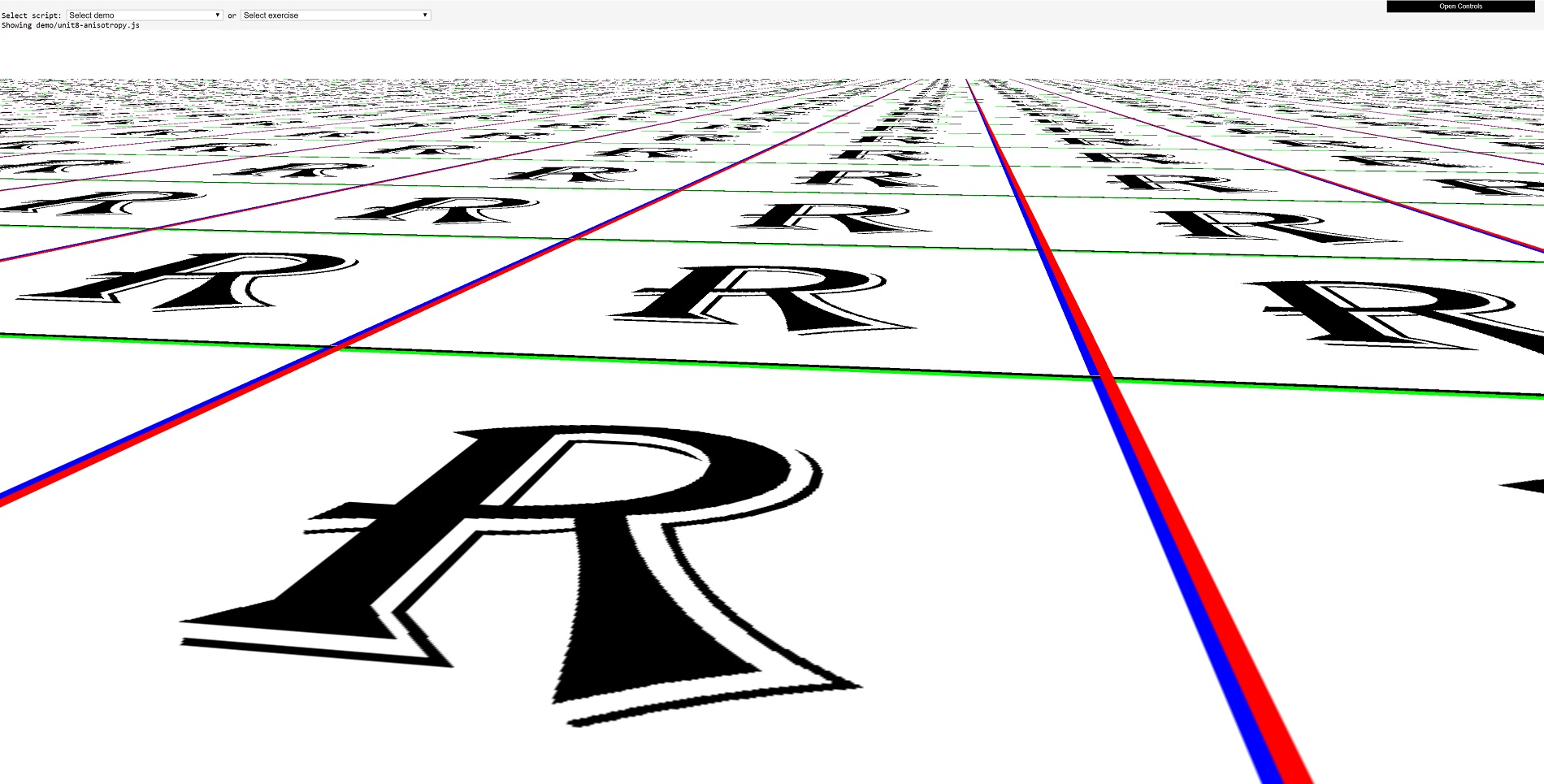

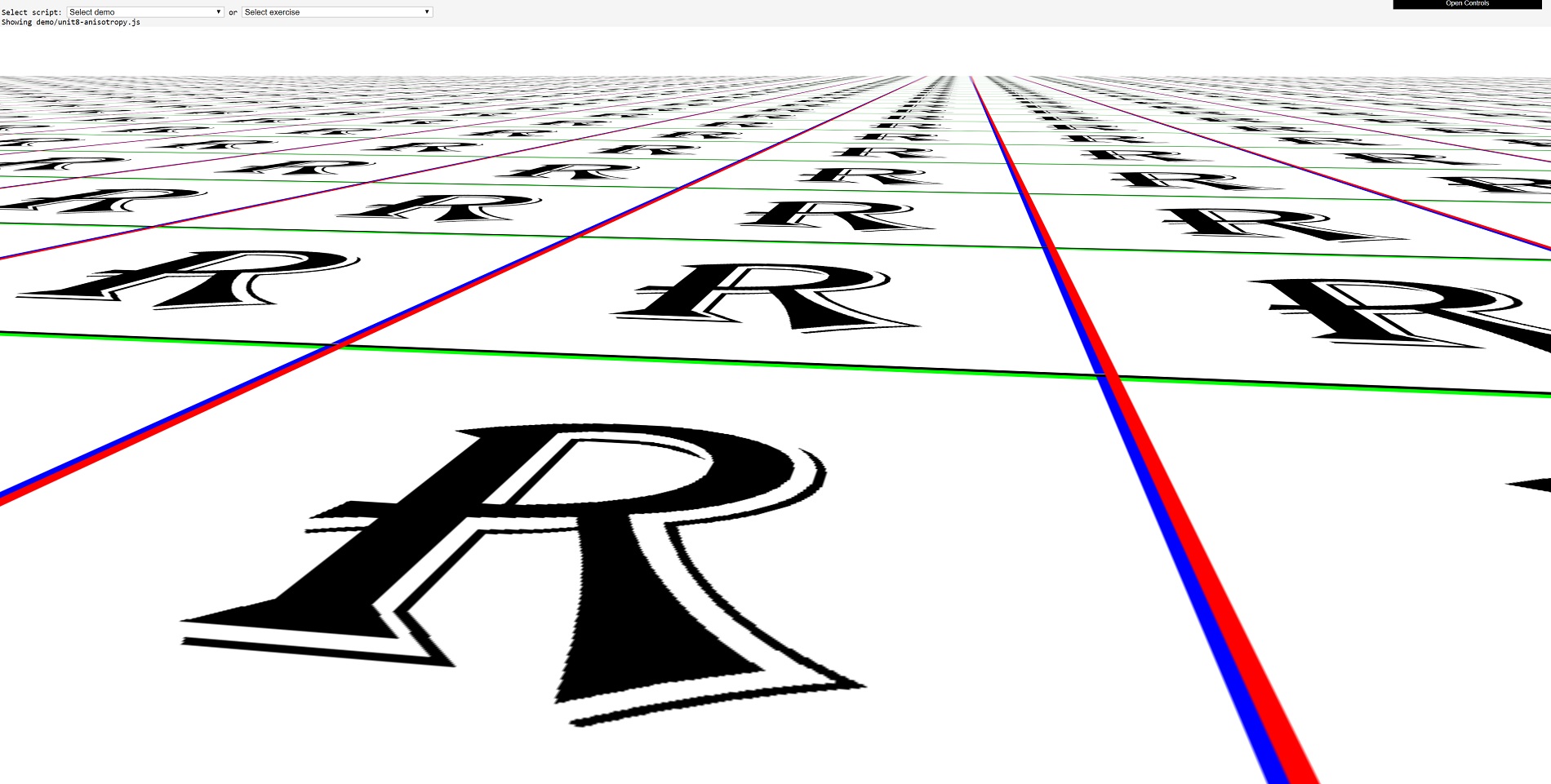

So having too few or too many texels, and having texels at an angle, require an additional process called texture filtering. If you don't use this process, then this is what you get:

Here we've replaced the crate texture with a letter R texture, to show more clearly how much of a mess it can get without texture filtering!

Graphics APIs such as Direct3D, OpenGL, and Vulkan all offer the same range of filtering types but use different names for them. Essentially, though, they all go like this:

- Nearest point sampling

- Linear texture filtering

- Anisotropic texture filtering

To all intents and purposes, nearest point sampling isn't filtering - this is because all that happens is the nearest texel to the pixel requiring the texture is sampled (i.e. copied from memory) and then blended with the pixel's original color.

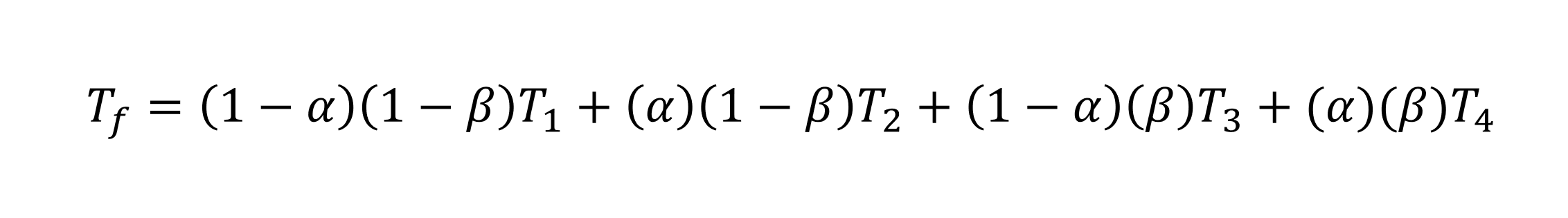

Here comes linear filtering to the rescue. The required u,v coordinates for the texel are sent off to the hardware for sampling, but instead of taking the very nearest texel to those coordinates, the sampler takes four texels. These are the four texels closest to the sample point, forming a 2 x 2 block around it.

These 4 texels are then blended together using a weighted formula. In Vulkan, for example, the formula is:

The T refers to texel color, where f is for the filtered one and 1 through to 4 are the four sampled texels. The values for alpha and beta come from how far away the point defined by the u,v coordinates is from the middle of the texel.
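For reference, the standard bilinear weighting in that notation can be written as T_f = (1 - α)(1 - β)·T1 + α(1 - β)·T2 + (1 - α)β·T3 + αβ·T4, assuming T1 and T2 are one row of the four texels and T3 and T4 the row next to it. Below is a minimal Python sketch of it; the half-texel offset, the clamping at the edges, and the name `sample_bilinear` are our assumptions rather than a copy of any API:

```python
import math

def sample_bilinear(texture, u, v):
    """Blend the 2 x 2 block of texels around u,v (0..1), clamping at edges."""
    height, width = len(texture), len(texture[0])
    # Shift by half a texel so that integer positions land on texel centers.
    x = u * width - 0.5
    y = v * height - 0.5
    x0, y0 = math.floor(x), math.floor(y)
    alpha, beta = x - x0, y - y0          # fractional distances, 0..1

    def texel(col, row):
        col = max(0, min(col, width - 1))   # clamp to the texture edges
        row = max(0, min(row, height - 1))
        return texture[row][col]

    t1, t2 = texel(x0, y0), texel(x0 + 1, y0)
    t3, t4 = texel(x0, y0 + 1), texel(x0 + 1, y0 + 1)
    return ((1 - alpha) * (1 - beta) * t1 + alpha * (1 - beta) * t2
            + (1 - alpha) * beta * t3 + alpha * beta * t4)

# 2 x 2 greyscale texture: black on the left, white on the right.
gradient = [[0.0, 1.0],
            [0.0, 1.0]]
print(sample_bilinear(gradient, 0.5, 0.5))   # halfway between the columns
```

Sampling exactly on a texel center returns that texel's value untouched, while anything in between gets a smooth mix, which is what removes the blocky look of nearest point sampling.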

Fortunately for everyone involved in 3D games, whether playing them or making them, this happens automatically in the graphics processing chip. In fact, this is what the TMU chip in the 3dfx Voodoo did: sampled 4 texels and then blended them together. Direct3D oddly calls this bilinear filtering, but since the time of Quake and the Voodoo's TMU chip, graphics cards have been able to do bilinear filtering in just one clock cycle (provided the texture is sitting handily in nearby memory, of course).

Linear filtering can be used alongside mipmaps, and if you want to get really fancy with your filtering, you can take 4 texels from a texture, then another 4 from the next level of mipmap, and then blend all that lot together. And Direct3D's name for this? Trilinear filtering. What's tri about this process? Your guess is as good as ours...
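Here is a small Python sketch of that "4 texels plus another 4" idea. It assumes a fractional level-of-detail value (`lod`) is already known for the pixel, which is a simplification; the helper names and the two-level mip chain are hypothetical:

```python
import math

def bilinear(tex, u, v):
    """Weighted blend of the 2 x 2 texels around u,v, clamped at the edges."""
    h, w = len(tex), len(tex[0])
    x, y = u * w - 0.5, v * h - 0.5
    x0, y0 = math.floor(x), math.floor(y)
    a, b = x - x0, y - y0

    def at(col, row):
        return tex[max(0, min(row, h - 1))][max(0, min(col, w - 1))]

    top = (1 - a) * at(x0, y0) + a * at(x0 + 1, y0)
    bottom = (1 - a) * at(x0, y0 + 1) + a * at(x0 + 1, y0 + 1)
    return (1 - b) * top + b * bottom

def trilinear(mips, u, v, lod):
    """Bilinear sample from two adjacent mip levels, then blend the results."""
    last = len(mips) - 1
    lod = max(0.0, min(lod, float(last)))   # keep lod inside the chain
    lower = int(lod)
    upper = min(lower + 1, last)
    frac = lod - lower                      # how far towards the smaller level
    return ((1 - frac) * bilinear(mips[lower], u, v)
            + frac * bilinear(mips[upper], u, v))

# Hypothetical greyscale mip chain: a 2 x 2 level and its 1 x 1 average.
mips = [[[0.0, 1.0], [0.0, 1.0]], [[0.5]]]
print(trilinear(mips, 0.25, 0.5, 0.5))  # halfway between level 0 and level 1
```

Blending across mip levels like this hides the visible seam that would otherwise appear where the renderer switches from one mipmap to the next.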

The last filtering method to mention is called anisotropic. This is actually an adjustment to the process done in bilinear or trilinear filtering. It initially involves a calculation of the degree of anisotropy of the primitive's surface (and it's surprisingly complex, too) -- this value increases as the primitive's aspect ratio alters due to its orientation:

The above image shows the same square primitive, with equal length sides; but as it rotates away from our perspective, the square appears to become a rectangle, and its width increases over its height. So the primitive on the right has a larger degree of anisotropy than those left of it (and in the case of the square, the degree is exactly zero).

Many of today's 3D games allow you to enable anisotropic filtering and then adjust the level of it (1x through to 16x), but what does that actually change? The setting controls the maximum number of additional texel samples that are taken per original linear sampling. For example, let's say the game is set to use 8x anisotropic bilinear filtering. This means that instead of just fetching 4 texel values, it will fetch 32 values.
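Following the counting used in this article, the worst-case number of texel fetches is simply the anisotropy level multiplied by the texels needed for one linear sample. The sketch below is only that arithmetic; the function name `texel_fetches` is ours, and real hardware takes fewer samples when a surface is less steeply angled:

```python
def texel_fetches(aniso_level, trilinear=False):
    """Upper bound on texel fetches per pixel for a given anisotropy level."""
    texels_per_sample = 8 if trilinear else 4   # trilinear reads two mip levels
    return aniso_level * texels_per_sample

print(texel_fetches(8))                    # 8x anisotropic bilinear -> 32
print(texel_fetches(16, trilinear=True))   # 16x anisotropic trilinear -> 128
```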

The difference the use of anisotropic filtering can make is clear to see:

Just scroll back up a little and compare nearest point sampling to maxed out 16x anisotropic trilinear filtering. So smooth, it's almost delicious!

But there must be a price to pay for all this lovely buttery texture deliciousness and it's surely performance: all maxed out, anisotropic trilinear filtering will be fetching 128 samples from a texture, for each pixel being rendered. For even the very best of the latest GPUs, that just can't be done in a single clock cycle.

If we take something like AMD's Radeon RX 5700 XT, each one of the texturing units inside the processor can fire off 32 texel addresses in one clock cycle, then load 32 texel values from memory (each 32 bits in size) in another clock cycle, and then blend 4 of them together in one more tick. So, for 128 texel samples blended into one, that requires at least 16 clock cycles.

Now the base clock rate of a 5700 XT is 1605 MHz, so sixteen cycles takes a mere 10 nanoseconds. Doing this for every pixel in a 4K frame (3840 x 2160), using just one texture unit, would still only take around 83 milliseconds. Okay, so perhaps performance isn't that much of an issue!

Even back in 1996, the likes of the 3Dfx Voodoo were pretty nifty when it came to handling textures. It could max out at 1 bilinear filtered texel per clock cycle, and with the TMU chip rocking along at 50 MHz, that meant 50 million texels could be churned out, every second. A game running at 800 x 600 and 30 fps would only need 14 million bilinear filtered texels per second.
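These back-of-the-envelope numbers are easy to reproduce. The sketch below uses the clock speeds and cycle counts quoted in the text; treating "4K" as 3840 x 2160 is our assumption, and the per-frame figure shifts if a different 4K resolution is used:

```python
CLOCK_HZ = 1605e6          # RX 5700 XT base clock, from the text
CYCLES_PER_PIXEL = 16      # cycles quoted for 128 blended texel samples
PIXELS_4K = 3840 * 2160    # assumes "4K" means 3840 x 2160

seconds_per_pixel = CYCLES_PER_PIXEL / CLOCK_HZ
frame_ms = seconds_per_pixel * PIXELS_4K * 1000
print(f"{seconds_per_pixel * 1e9:.1f} ns per pixel, {frame_ms:.0f} ms per frame")

# Voodoo: texels needed per second at 800 x 600 and 30 fps, against
# a peak of one bilinear filtered texel per clock at 50 MHz.
voodoo_needed = 800 * 600 * 30
voodoo_peak = 50_000_000
print(f"{voodoo_needed / 1e6:.1f} million needed of {voodoo_peak / 1e6:.0f} million")
```

Bear in mind this is for a single texture unit working alone; a real GPU spreads the work over many of them in parallel.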

However, this all assumes that the textures are in nearby memory and that only one texel is mapped to each pixel. Twenty years ago, the idea of needing to apply multiple textures to a primitive was almost completely alien, but it's commonplace now. Let's have a look at why this change came about.

Lighting the way to spectacular images

To help understand how texturing became so important, take a look at this scene from Quake:

It's a dark image, that was the nature of the game, but you can see that the darkness isn't the same everywhere - patches of the walls and floor are brighter than others, to give a sense of the overall lighting in that area.

The primitives making up the sides and ground all have the same texture applied to them, but there is a second one, called a light map, that is blended with the texel values before they're mapped to the frame pixels. In the days of Quake, light maps were pre-calculated and made by the game engine, and used to generate static and dynamic light levels.

The advantage of using them was that complex lighting calculations were done to the textures, rather than the vertices, notably improving the appearance of a scene and for very little performance cost. It's obviously not perfect: as you can see on the floor, the boundary between the lit areas and those in shadow is very stark.

In many ways, a light map is just another texture (remember that they're all nothing more than 2D data arrays), so what we're looking at here is an early use of what became known as multitexturing. As the name clearly suggests, it's a process where two or more textures are applied to a primitive. The use of light maps in Quake was a solution to overcome the limitations of Gouraud shading, but as the capabilities of graphics cards grew, so did the applications of multitexturing.
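In its simplest form, blending a light map with a base texture just means scaling each texel by the matching light value. The Python sketch below assumes a multiply blend and a light level between 0.0 and 1.0; it is an illustration of the idea, not a description of Quake's actual code, and the names are hypothetical:

```python
def apply_light_map(base_texel, light_level):
    """Scale an (r, g, b) texel by a light map value between 0.0 and 1.0."""
    return tuple(round(channel * light_level) for channel in base_texel)

wall_texel = (180, 120, 60)   # hypothetical color from the wall texture

print(apply_light_map(wall_texel, 1.0))   # fully lit: (180, 120, 60)
print(apply_light_map(wall_texel, 0.25))  # in shadow: (45, 30, 15)
```

The same wall texture can therefore look bright next to a torch and nearly black in a corner, without the artists having to paint two versions of it.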

The 3Dfx Voodoo, like other cards of its era, was limited by how much it could do in one rendering pass. This is essentially a complete rendering sequence: from processing the vertices, to rasterizing the frame, and then modifying the pixels into a final frame buffer. Twenty years ago, games performed single pass rendering pretty much all of the time.

This is because processing the vertices twice, just because you wanted to apply some more textures, was too costly in terms of performance. We had to wait a couple of years after the Voodoo, until the ATI Radeon and Nvidia GeForce 2 graphics cards were available before we could do multitexturing in one rendering pass.

These GPUs had more than one texture unit per pixel processing section (aka, a pipeline), so fetching a bilinear filtered texel from two separate textures was a cinch. That made light mapping even more popular, allowing for games to make them fully dynamic, altering the light values based on changes in the game's environment.

But there is so much more that can be done with multiple textures, so let's take a look.

It's normal to bump up the height

In this series of articles on 3D rendering, we've not addressed how the role of the GPU really fits into the whole shebang (we will do, just not yet!). But if you go back to Part 1, and look at all of the complex work involved in vertex processing, you may think that this is the hardest part of the whole sequence for the graphics processor to handle.

For a long time it was, and game programmers did everything they could to reduce this workload. That meant reaching into the bag of visual tricks and pulling off as many shortcuts and cheats as possible, to give the same visual appearance of using lots of vertices all over the place, but not actually use that many to begin with.

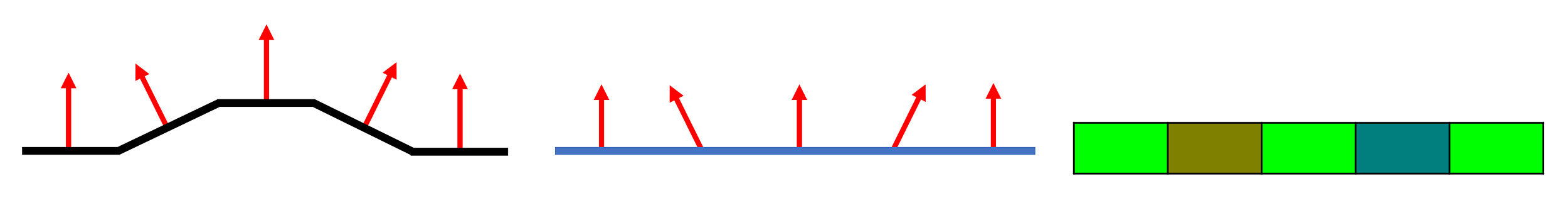

And most of these tricks involved using textures called height maps and normal maps. The two are related in that the latter can be created from the former, but for now, let's just take a look at a technique called bump mapping.

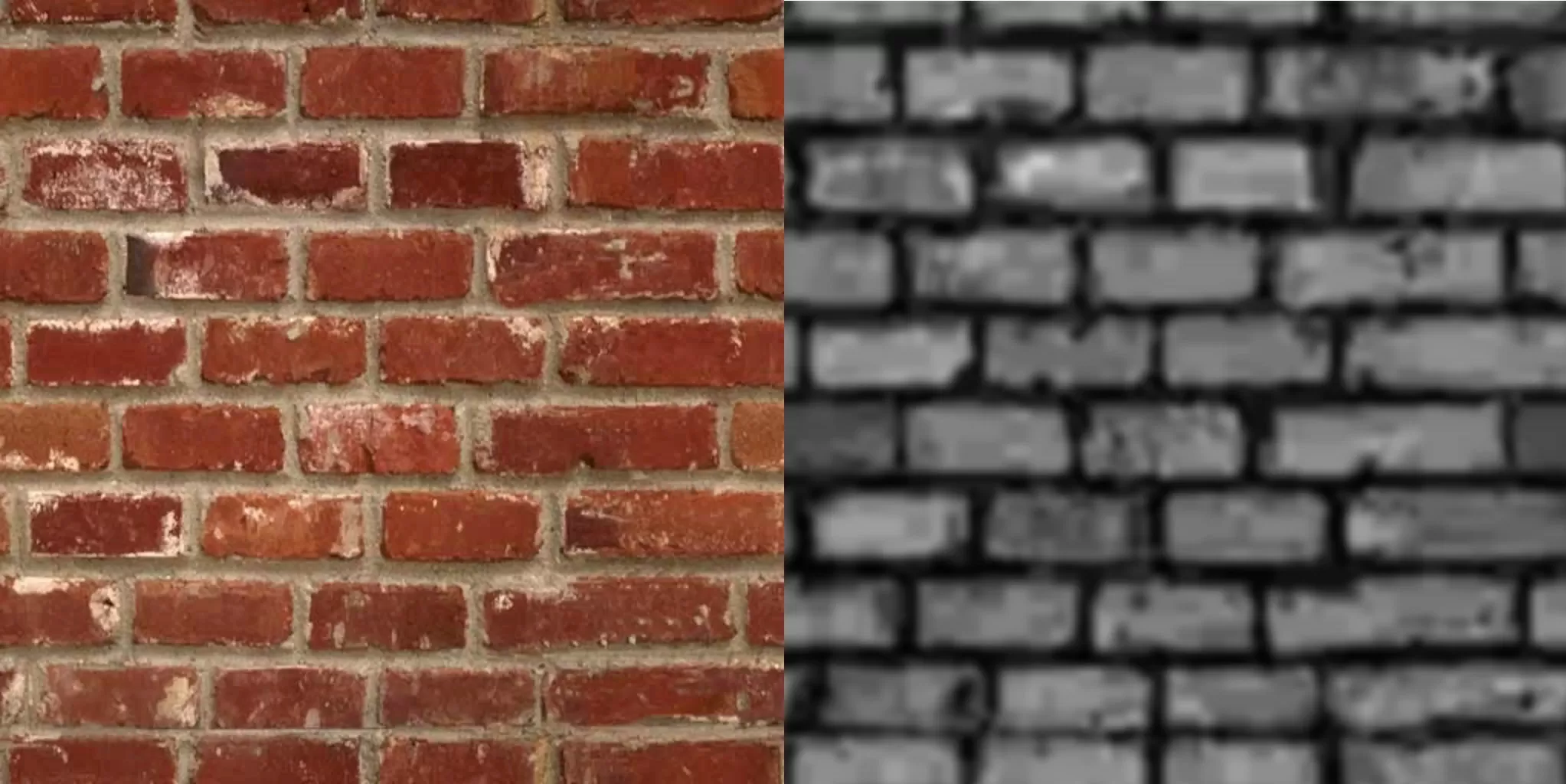

Bump mapping involves using a 2D array called a height map, that looks like an odd version of the original texture. For example, in the above image, there is a realistic brick texture applied to 2 flat surfaces. The texture and its height map look like this:

The colors of the height map represent the normals of the brick's surface (we covered what a normal is in Part 1 of this series of articles). When the rendering sequence reaches the point of applying the brick texture to the surface, a sequence of calculations takes place to adjust the color of the brick texture based on the normal.

The result is that the bricks themselves look more 3D, even though they are still totally flat. If you look carefully, particularly at the edges of the bricks, you can see the limitations of the technique: the texture looks slightly warped. But for a quick trick of adding more detail to a surface, bump mapping is very popular.
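One common way to get a normal out of a height map is to look at how quickly the height changes between neighboring texels. The Python sketch below uses central differences for this; the function name, the `strength` parameter, and the convention that z points out of the surface are all assumptions made for the example:

```python
import math

def normal_from_height(height_map, col, row, strength=1.0):
    """Estimate the surface normal at one texel from its neighbors' heights."""
    rows, cols = len(height_map), len(height_map[0])

    def h(c, r):
        return height_map[max(0, min(r, rows - 1))][max(0, min(c, cols - 1))]

    # Central differences: how quickly the height changes along x and y.
    dx = (h(col + 1, row) - h(col - 1, row)) * 0.5 * strength
    dy = (h(col, row + 1) - h(col, row - 1)) * 0.5 * strength
    length = math.sqrt(dx * dx + dy * dy + 1.0)
    return (-dx / length, -dy / length, 1.0 / length)

# Hypothetical height map: flat on the left, rising towards the right.
heights = [[0.0, 0.0, 1.0, 2.0],
           [0.0, 0.0, 1.0, 2.0]]
print(normal_from_height(heights, 0, 0))  # flat area: points straight out
print(normal_from_height(heights, 2, 0))  # slope: tilts away from the rise
```

Where the height map is flat the normal points straight out of the surface, and where the heights climb it leans over, which is what makes the lighting react as if the bumps were really there.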

A normal map is like a height map, except the colors of that texture are the normals themselves. In other words, a calculation to convert the height map into normals isn't required. You might wonder just how can colors be used to represent an arrow pointing in space? The answer is simple: each texel has a given set of r,g,b values (red, green, blue) and those numbers directly represent the x,y,z values for the normal vector.

In the above example, the left diagram shows how the direction of the normals changes across a bumpy surface. To represent these same normals in a flat texture (center diagram), we assign a color to them. In our case, we've used r,g,b values of (0,255,0) for straight up, and then increasing amounts of red for left, and blue for right.

Note that this color isn't blended with the original pixel - it simply tells the processor what direction the normal is facing, so it can properly calculate the angles between the camera, lights and the surface to be textured.
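To show how a color can stand in for a direction, here is a minimal Python sketch. It assumes the widely used encoding where each 0 to 255 channel maps to the range -1 to 1 (which is not the simplified scheme in the diagram above), and it uses a basic Lambert diffuse term as the lighting calculation; both choices, and the function names, are ours:

```python
import math

def decode_normal(r, g, b):
    """Map 0..255 color channels to a unit vector with components in -1..1."""
    x, y, z = (c / 255.0 * 2.0 - 1.0 for c in (r, g, b))
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)

def diffuse(normal, to_light):
    """Lambert term: cosine of the angle between normal and light direction."""
    return max(0.0, sum(n * l for n, l in zip(normal, to_light)))

# (128, 128, 255) is the usual "pointing straight out of the surface" color
# in this encoding; note it differs from the simplified scheme in the diagram.
flat = decode_normal(128, 128, 255)
tilted = decode_normal(200, 128, 220)
light = (0.0, 0.0, 1.0)   # light shining straight at the surface

print(diffuse(flat, light), diffuse(tilted, light))
```

The texel facing the light head-on comes out almost fully lit, while the tilted one is dimmer, even though both belong to the same perfectly flat polygon.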

The benefits of bump and normal mapping really shine when dynamic lighting is used in the scene, and the rendering process calculates the effects of the light changes per pixel, rather than for each vertex. Modern games now use a stack of textures to improve the quality of the magic trick being performed.

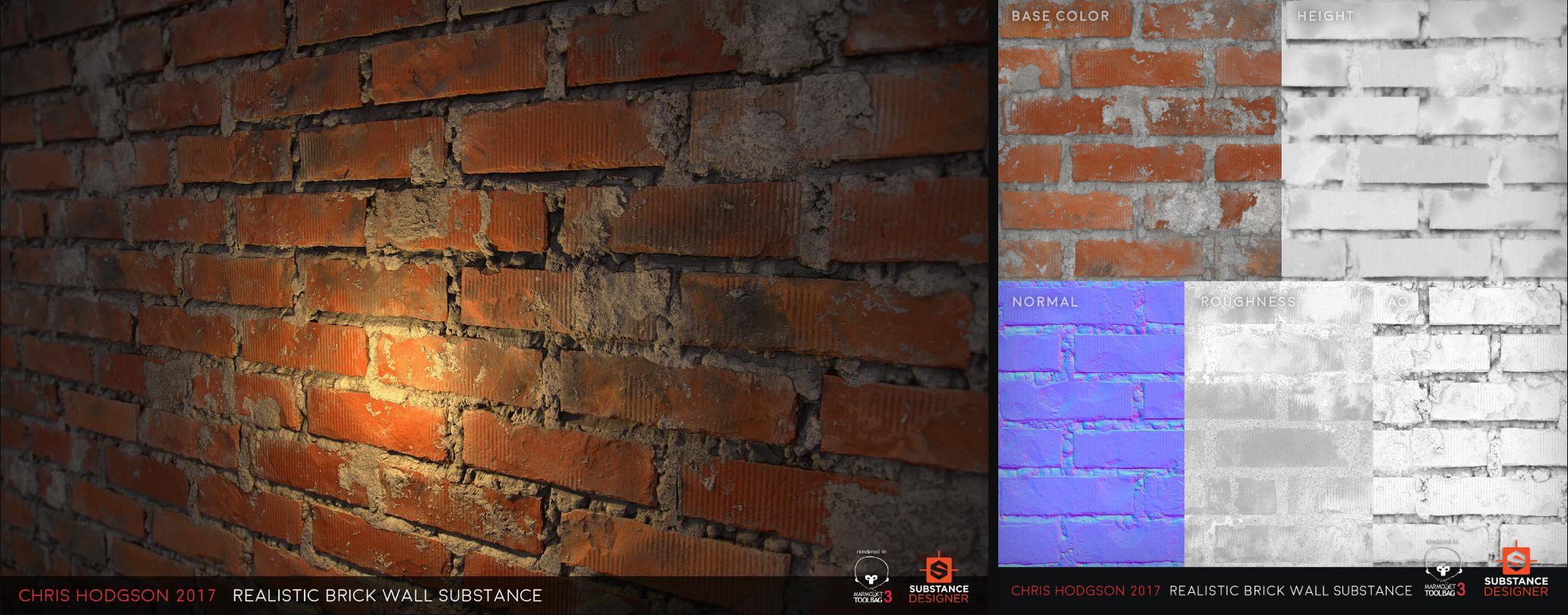

This realistic looking wall is amazingly still just a flat surface -- the details on the bricks and mortar aren't done using millions of polygons. Instead, just 5 textures and a lot of clever math get the job done.

The height map was used to generate the way that the bricks cast shadows on themselves and the normal map to simulate all of the small changes in the surface. The roughness texture was used to change how the light reflects off the different elements of the wall (e.g. a smoothed brick reflects more consistently than rough mortar does).

The final map, labelled AO in the above image, forms part of a process called ambient occlusion: this is a technique that we'll look at in more depth in a later article, but for now, it just helps to improve the realism of the shadows.

Texture mapping is crucial

Texturing is absolutely crucial to game design. Take Warhorse Studios' 2018 release Kingdom Come: Deliverance -- a first person RPG set in 15th century Bohemia, an old country of mid-East Europe. The designers were keen on creating as realistic a world as possible, for the given period. And the best way to draw the player into a life hundreds of years ago was to have the right look for every landscape view, building, set of clothes, hair, everyday items, and so on.

Each unique texture in this single image from the game has been handcrafted by artists and their use by the rendering engine controlled by the programmers. Some are small, with basic details, and receive little in the way of filtering or being processed with other textures (e.g. the chicken wings).

Others are high resolution, showing lots of fine detail; they've been anisotropically filtered and then blended with normal maps and other textures -- just look at the face of the man in the foreground. The different requirements of the texturing of each item in the scene have all been accounted for by the programmers.

All of this happens in so many games now, because players expect greater levels of detail and realism. Textures will get larger, and more will be used on a surface, but the process of sampling the texels and applying them to pixels will still essentially be the same as it was in the days of Quake. The best technology never dies, no matter how old it is!


Source: https://www.techspot.com/article/1916-how-to-3d-rendering-texturing/

Posted by: childershompareect.blogspot.com
